Data Mesh - A Deep Dive Introduction

Here to share my experience in my areas of expertise:

Cloud Adoption; Applied AI, ML & MLOps; Microservices 2.0; Digital Portfolio & Product P&L; Federated Learning (Adv. AI); IaC, X-Ops, Containers, CaaS (Container-as-a-Service | Kubernetes), CaaS Governance (HELM); Digital Product UX/UI/LCNC (PWA / Micro frontend / Low Code No Code canvas / Self Service Interface); Distributed Computing; Cybersecurity; Industry 4.0 (IIoT, Intelligent Operation Platform, OT & IT); Blockchain in Data Trust; Data & Digital Transformation

CONTENTS:

Introduction to data mesh

Evolution

Principles of data mesh

Deployment design patterns

Data mesh involving people, process and technology

Integrating data mesh architecture

Introduction to Data mesh

Data mesh is a domain-oriented, decentralized approach to analytical data. It evolves beyond the traditional centralized approach of storing data in data lakes or data warehouses.

Data mesh mainly provides organizational agility by allowing data producers and data consumers to access and manage data without routing it through a centrally owned data lake or data warehouse.

This decentralization gives data ownership to domain-specific teams, so that they can own, manage, and serve the data as a product.

Data mesh is not a tool; it is more of a philosophy or theory that drives data architectures.

Evolution:

First-generation data platform: data warehouses. They are costly, good only for structured data, and accumulate technical debt because a lot of ETL pipelines have to be designed.

Second-generation data platform: data lakes. They hold both structured and unstructured data, but have to be managed very carefully, otherwise they turn into data swamps (an outcome of undefined data sets from multiple sources). They offer no ACID properties and require a specialised skill set.

Third-generation data platform: a combination of batch and stream processing. It has central storage for data coming from different sources (structured, semi-structured, unstructured), and the data is consumed by data scientists, users, dashboards, etc. Problems: it requires more time and effort and is not flexible.

Data mesh: instead of flowing data from the domains into a centrally owned data lake or platform, the domains host and serve their domain datasets in an easily consumable way.

Benefits:

Data can be duplicated across domains, since different domains may share common data

Each domain can serve its data in the form that suits it, such as a graph for some and an RDBMS for others

A serve-and-pull model instead of push-and-ingest

Ownership of data lies with the domain teams rather than a central data team

Domain Dataset as Product is:

Discoverable (data cataloguing)

Addressable (data cataloguing)

Trustworthy

Self-describing

Interoperable

Secure
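To make these attributes concrete, here is a minimal sketch of a data product descriptor. Everything in it is an assumption made for illustration: the `DataProduct` class, its field names, the `mesh://` address scheme and the `quality_gaps` helper do not come from any specific data mesh tool. Discoverability is left to a catalogue that would index such descriptors.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List


@dataclass
class DataProduct:
    """Hypothetical descriptor for a domain dataset served as a product."""

    domain: str                     # owning domain team
    name: str                       # dataset name, unique within the domain
    description: str                # self-describing: what the data means
    schema: Dict[str, str]          # self-describing: column name -> type
    owner: str                      # trustworthy: an accountable contact
    freshness_sla_hours: int        # trustworthy: a published freshness promise
    output_format: str = "parquet"  # interoperable: a format agreed across domains
    allowed_roles: FrozenSet[str] = field(default_factory=frozenset)  # secure

    @property
    def address(self) -> str:
        # Addressable: one stable, unique identifier per product.
        # The "mesh://" scheme is made up for this sketch.
        return f"mesh://{self.domain}/{self.name}"

    def can_read(self, role: str) -> bool:
        # Secure: access is decided per role, at the product boundary.
        return role in self.allowed_roles


def quality_gaps(product: DataProduct) -> List[str]:
    """Return the attributes a product still fails to satisfy."""
    gaps = []
    if not product.description.strip():
        gaps.append("self-describing: missing description")
    if not product.schema:
        gaps.append("self-describing: missing schema")
    if not product.owner:
        gaps.append("trustworthy: missing owner")
    if not product.allowed_roles:
        gaps.append("secure: no roles granted access")
    return gaps


orders = DataProduct(
    domain="sales",
    name="orders",
    description="One row per customer order",
    schema={"order_id": "int", "amount": "float"},
    owner="sales-data@example.com",
    freshness_sla_hours=24,
    allowed_roles=frozenset({"analyst"}),
)
print(orders.address)
print(quality_gaps(orders))
```

A check like `quality_gaps` could run before a product is published, so that incomplete products never reach consumers.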

Principles of data mesh

1. Domain ownership

Data pipelines owned by teams with domain knowledge

Domains own cleansing, refinement, pre-aggregation, historization etc

Domains responsible for data governance, data lineage etc

2. Data as a product

This brings in product thinking, which means keeping the consumers of the data in mind

Providing high-quality data

Basically, domain data should be treated like any other public API

3. Self-serve data infrastructure platform

Dedicated data platform

Provides domain-agnostic functionality, common tools

Easy to use and low maintenance

Easy to deploy repeatable patterns for common requirements

4. Federated computational governance

Global interoperability across domains

Define and use global data governance policies
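One way to read "computational" governance is that global policies are written as code and checked automatically against every domain's data products. The sketch below assumes a simple metadata dictionary per product; the policy names and the metadata layout (`owner`, `columns`, `pii`) are hypothetical.

```python
from typing import Callable, List, Optional

# A policy inspects one product's metadata and returns a violation message,
# or None when the product complies.
Policy = Callable[[dict], Optional[str]]


def require_owner(meta: dict) -> Optional[str]:
    return None if meta.get("owner") else "missing owner"


def require_pii_classification(meta: dict) -> Optional[str]:
    # Every column must state explicitly whether it holds personal data.
    unclassified = [
        column
        for column, info in meta.get("columns", {}).items()
        if "pii" not in info
    ]
    if unclassified:
        return "columns without PII classification: " + ", ".join(sorted(unclassified))
    return None


# Defined once, globally; applied to every domain.
GLOBAL_POLICIES: List[Policy] = [require_owner, require_pii_classification]


def check(meta: dict, policies: Optional[List[Policy]] = None) -> List[str]:
    """Run all policies against one product and collect the violations."""
    violations = []
    for policy in (GLOBAL_POLICIES if policies is None else policies):
        message = policy(meta)
        if message is not None:
            violations.append(message)
    return violations


compliant = {"owner": "sales", "columns": {"email": {"pii": True}}}
print(check(compliant))
```

The domains stay free to model their own data; only the shared rules are defined centrally, which is the "federated" part.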

Deployment design patterns

Dividing data into different domains doesn’t mean having a distinct database for each domain. There are different design patterns for deploying schemas within a data mesh.

Co-located approach

The co-located approach places domains with different schemas under the management of a single database instance. This improves performance when combining data across multiple domains.

Co-location improves efficiency through better query optimization and also lowers overhead.

However, there are cases where the co-located approach does not fit. For example, certain data sovereignty laws may require that the data created by a business unit within a country remain in that country.
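The co-located idea can be sketched with Python's built-in `sqlite3` module. This is only a stand-in: SQLite's `ATTACH DATABASE` is used here to imitate two domain schemas living behind a single connection, and the domain names, tables and rows are invented for the example.

```python
import sqlite3


def build_colocated_instance() -> sqlite3.Connection:
    """One connection, two domain schemas (imitated with ATTACH)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("ATTACH DATABASE ':memory:' AS sales")
    conn.execute("ATTACH DATABASE ':memory:' AS marketing")
    conn.execute("CREATE TABLE sales.orders (customer_id INTEGER, amount REAL)")
    conn.execute("CREATE TABLE marketing.touches (customer_id INTEGER, campaign TEXT)")
    conn.executemany(
        "INSERT INTO sales.orders VALUES (?, ?)",
        [(1, 120.0), (2, 80.0), (1, 40.0)],
    )
    conn.executemany(
        "INSERT INTO marketing.touches VALUES (?, ?)",
        [(1, "spring"), (2, "summer")],
    )
    conn.commit()
    return conn


def revenue_by_campaign(conn: sqlite3.Connection) -> list:
    # The join crosses domain boundaries, but one engine plans and runs it,
    # so no data has to be moved between systems first.
    return conn.execute(
        """
        SELECT m.campaign, SUM(o.amount) AS revenue
        FROM sales.orders AS o
        JOIN marketing.touches AS m ON m.customer_id = o.customer_id
        GROUP BY m.campaign
        ORDER BY m.campaign
        """
    ).fetchall()


conn = build_colocated_instance()
print(revenue_by_campaign(conn))
```

Each domain still owns its own schema; what is shared is the engine that optimizes and executes cross-domain queries.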

Isolated approach

The data product is completely self-contained within a single domain.

The schemas used with this technique are usually narrow in scope and serve operational reporting requirements rather than enterprise analytics.

Isolated domains suit best when there is a need for strong security or a desire for organizational independence.

It is up to the software infrastructure to decide how query execution takes place across the different data sets in a co-located schema, including how data movement is optimized and how efficiently queries are processed.

Data Mesh involving people, process, and technology

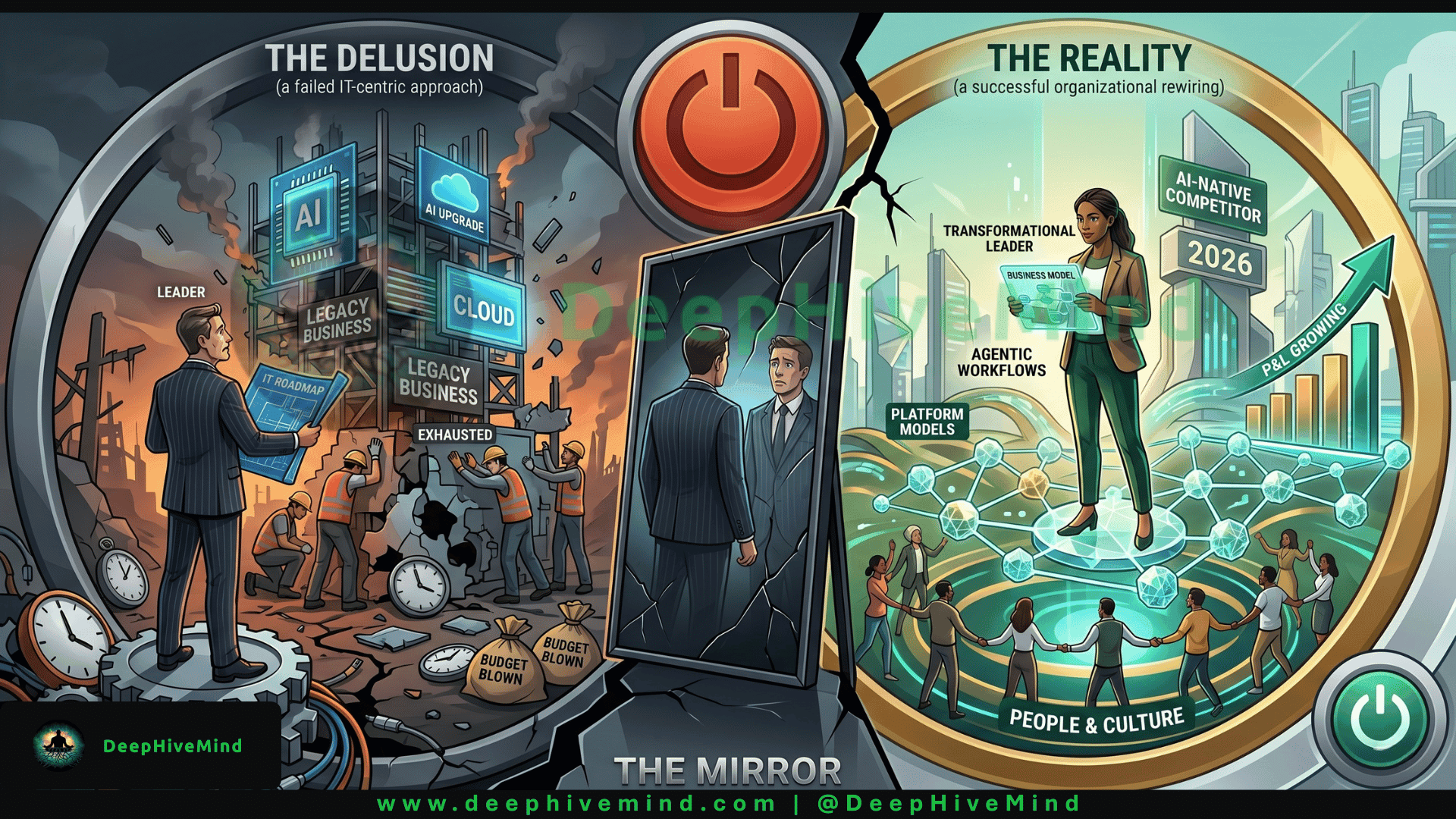

Data Mesh, being a ‘socio-technical’ approach, requires changes to the organization across all three dimensions: people, process and technology. Organizations supporting Data Mesh may spend 70% of their effort on processes and people and 30% on technology.

People: from centralized data team to decentralized data domains

Starting on a data mesh journey requires some adjustments to employees’ roles and also leads to organizational changes. Existing employees will need to adapt to the concept of Data Mesh, as they may not yet have the knowledge to play a part in the journey. Hence, we have to focus on the transition of data ownership from a centralized data team to decentralized data domains, as well as on the reorganization of existing employees.

Process: Organizational process changes

Data mesh implementation needs organizational process changes to support agile data structures and to promote sustainability. For data governance, we need to include new processes around data policy definition, implementation and enforcement. These will affect the processes for accessing and managing data, as well as the processes for exploiting that data as part of business-as-usual (BAU) operations.

How to Integrate Data Mesh Architecture Into our Ecosystem

1. Connect to data sources where they reside

The first step in data mesh implementation is to connect to the data sources where they reside instead of centralizing all the data first.

In this approach, we leverage the data first, querying it where it resides.

So, the idea is to enable connections to the data sources using connectors.
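As a rough illustration of this step, the sketch below defines a small connector interface and two connectors that read data where it already lives, without copying it into a central store first. The `Connector` protocol, the class names and the sample data are assumptions for this example, not the API of any particular product.

```python
import csv
import io
import sqlite3
from typing import Dict, Iterator, List, Protocol


class Connector(Protocol):
    """Anything that can stream rows from its own source."""

    def read(self) -> Iterator[dict]: ...


class CsvConnector:
    """Reads rows from CSV text (a stand-in for a file or object store)."""

    def __init__(self, text: str) -> None:
        self._text = text

    def read(self) -> Iterator[dict]:
        yield from csv.DictReader(io.StringIO(self._text))


class SqliteConnector:
    """Runs a query against an existing database and streams the result."""

    def __init__(self, conn: sqlite3.Connection, query: str) -> None:
        self._conn = conn
        self._query = query

    def read(self) -> Iterator[dict]:
        cursor = self._conn.execute(self._query)
        columns = [col[0] for col in cursor.description]
        for row in cursor:
            yield dict(zip(columns, row))


def load(sources: Dict[str, Connector], name: str) -> List[dict]:
    # Consumers address a source by name; the connector hides where
    # and how the data is actually stored.
    return list(sources[name].read())


db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE invoices (customer_id INTEGER, total REAL)")
db.execute("INSERT INTO invoices VALUES (1, 99.5)")

sources: Dict[str, Connector] = {
    "crm.customers": CsvConnector("id,name\n1,Ada\n2,Lin\n"),
    "billing.invoices": SqliteConnector(db, "SELECT customer_id, total FROM invoices"),
}
print(load(sources, "billing.invoices"))
```

Adding a new kind of source then means writing one more connector class, while the consumers' code stays the same.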

2. Create logical domains

After connecting to all the data sources, the next step is to create an interface for business and analytics teams to find their data. In Data Mesh terms, we call that a logical domain.

It’s called logical because we are not moving data to a central repository; instead, we are creating a logical place where teams can log into a dashboard to see the data that’s been made available to them.

So, this supports self-service, where data consumers can independently do more on their own.
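A logical domain can be pictured as a thin layer that lists what a team may see and fetches it on demand from wherever it lives. The following is a minimal sketch under that assumption; the `LogicalDomain` class, its methods and the team names are hypothetical.

```python
from typing import Callable, Dict, List, Set

# A loader fetches rows from the underlying source when asked;
# nothing is copied into the logical domain itself.
Loader = Callable[[], List[dict]]


class LogicalDomain:
    def __init__(self, name: str) -> None:
        self.name = name
        self._loaders: Dict[str, Loader] = {}
        self._grants: Dict[str, Set[str]] = {}

    def expose(self, dataset: str, loader: Loader, teams: Set[str]) -> None:
        """Make a dataset available to the given teams."""
        self._loaders[dataset] = loader
        self._grants[dataset] = set(teams)

    def visible_to(self, team: str) -> List[str]:
        """What a team would see listed on its dashboard."""
        return sorted(d for d, teams in self._grants.items() if team in teams)

    def fetch(self, team: str, dataset: str) -> List[dict]:
        if team not in self._grants.get(dataset, set()):
            raise PermissionError(f"{team} has no access to {dataset}")
        return self._loaders[dataset]()


domain = LogicalDomain("customer-360")
domain.expose("customers", lambda: [{"id": 1, "name": "Ada"}], {"analytics", "marketing"})
domain.expose("invoices", lambda: [{"customer_id": 1, "total": 99.5}], {"finance"})
print(domain.visible_to("analytics"))
```

Because `fetch` only calls the loader at request time, the data stays at its source and the domain remains purely logical.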

3. Enable teams to create data products

After providing access to the data that the domain team needs, the next step is to teach them how to convert data sets into data products. Then, from those data products, build a library or catalogue of data products.

Creating data products is a powerful capability: it enables data consumers to move very quickly from discovery to ideation and on to insight, because data products are quickly created and then used across the organization.
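The catalogue of data products mentioned above can be sketched as a small in-memory registry with publish and search operations. The `CatalogueEntry` and `Catalogue` names, the `domain/name` key format and the sample entries are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class CatalogueEntry:
    domain: str
    name: str
    description: str
    tags: Tuple[str, ...] = ()


class Catalogue:
    """Hypothetical in-memory library of published data products."""

    def __init__(self) -> None:
        self._entries: Dict[str, CatalogueEntry] = {}

    def publish(self, entry: CatalogueEntry) -> str:
        key = f"{entry.domain}/{entry.name}"
        if key in self._entries:
            raise ValueError(f"{key} is already published")
        self._entries[key] = entry
        return key

    def search(self, term: str) -> List[str]:
        """Case-insensitive match on name, description, or an exact tag."""
        needle = term.lower()
        return sorted(
            key
            for key, entry in self._entries.items()
            if needle in entry.name.lower()
            or needle in entry.description.lower()
            or any(needle == tag.lower() for tag in entry.tags)
        )


catalogue = Catalogue()
catalogue.publish(
    CatalogueEntry("sales", "orders", "One row per customer order", ("revenue",))
)
catalogue.publish(
    CatalogueEntry("marketing", "campaigns", "Campaign spend by channel", ("spend",))
)
print(catalogue.search("order"))
```

Search is what lets a consumer go from discovery to use without asking the producing team first.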
