The 2026 Leadership Blueprint: How CXOs Can Engineer an AI Strategy That Delivers a Real Moat

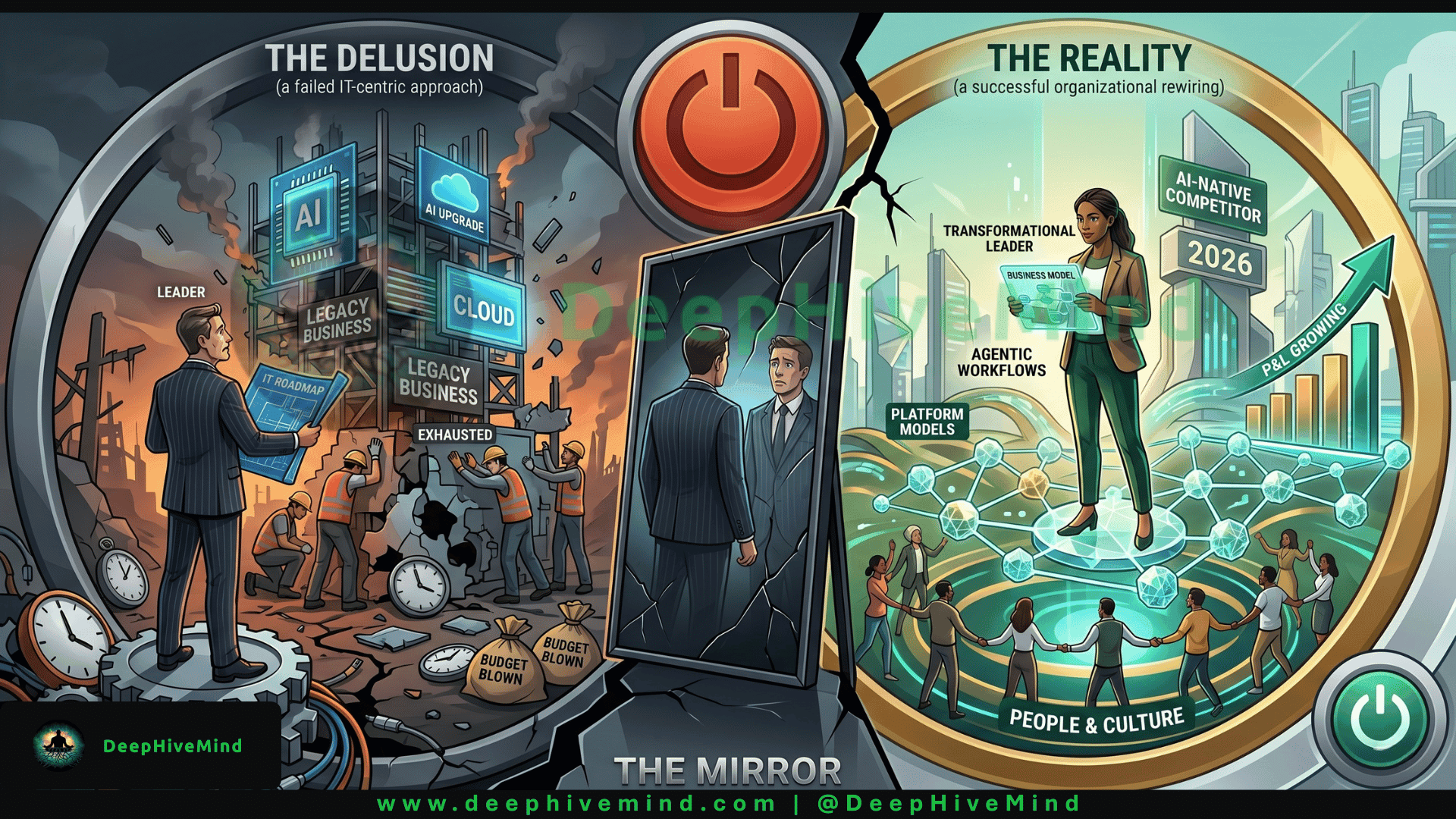

The 2026 AI Strategy Most CXOs Get Wrong (And The 3-Step Fix That Ships Value)

Most AI initiatives are just expensive science projects. This is your field manual for turning algorithmic ambition into hard financial return.

I write from hands-on experience in the following areas:

- Cloud Adoption
- Applied AI, ML & MLOps
- Microservices 2.0
- Digital Portfolio & Product P&L
- Federated Learning (Adv. AI)
- IaC, X-Ops, Containers, CaaS (Container-as-a-Service | Kubernetes), CaaS Governance (Helm)
- Digital Product UX/UI/LCNC (PWA / Micro frontend / Low Code No Code canvas / Self Service Interface)
- Distributed Computing
- Cybersecurity
- Industry 4.0 (IIoT, Intelligent Operation Platform, OT & IT)
- Blockchain in Data Trust
- Data & Digital Transformation

Right now, somewhere in your organization, a team is “exploring AI use cases” with no link to a P&L line. Somewhere else, a vendor is selling you a tool that solves a problem you don’t have. And your board is growing impatient—not with the technology, but with the absence of measurable impact.

If you’re the executive sponsor for AI in your company, 2026 is not about experimentation. It’s about execution integrity. You don’t need another demo. You need a durable architecture for value creation, built on clear-eyed business logic and human accountability.

I’ve distilled what works into a single operating philosophy. This is not a survey of trends. It is a blunt, opinionated guide for officers who want to move from scattered pilots to a repeatable AI engine.

What a Genuinely Effective AI Strategy Looks Like in 2026 (An Authority View)

Let’s retire the superficial maturity models. A real AI strategy in this epoch isn’t a checklist of capabilities—it’s a coherent stance on three interdependent forces. I call this the Executive Tripod:

Friction Economics (The Pain Point Lens): A winning strategy begins with a forensic identification of the most expensive forms of procedural drag inside your company. Not “what AI can do,” but “what activities are quietly bleeding our margins because they rely on slow, expensive human cognition?” In a distribution business, that might be load optimization. In professional services, it might be the first draft of a complex SOW. The strategy attaches AI to a specific cost or speed lever, never to a vague aspiration.

Evidence Integrity (The Industry Barrier): You can’t reason over garbage. The foundational layer of any AI strategy in 2026 is a single-source-of-truth discipline for the data that powers decisions. If your customer contracts, product specifications, and service histories live in fragmented, unstructured repositories, the most sophisticated models will generate confident nonsense. A credible AI strategy is 80% information curation and 20% model deployment. Period.

Control Transparency (The Governance Mandate): If your AI can make or influence a decision that impacts a customer, an employee, or a regulatory obligation, you must be able to explain exactly how that decision was formed. Not in a white paper—in production. A winning 2026 strategy includes a built-in chain of auditability. It treats explainability as a core requirement, not a feature request from legal.

The Executive Insight: Most companies believe they need an “AI strategy.” In reality, they desperately need a decision-integrity strategy, where AI acts as a sophisticated retrieval and synthesis layer riding on top of meticulously governed knowledge.

How to Construct It From Scratch: The CXO Field Manual

You have legacy infrastructure, cautious stakeholders, and a shareholder letter due in six months. Here is how to build a winning AI capability without betting the farm on any single technology.

Step 1: Hunt for the “Margin Bleed” Task, Not the Hype

Walk away from the innovation theater. Go directly to the operational leaders who run your core processes—claims, underwriting, supply chain, customer onboarding. Ask one question: “What is the one repetitive, data-heavy task that consumes your best people for more than 20 hours a week?”

- The Framework: I use the Cognitive Load Compass. Map tasks along two axes: Decision Complexity (high judgment vs. rule-based) and Information Density (many fragmented sources vs. a single source). The sweet spot for early AI deployment is high information density plus rule-based complexity. That’s where you get immediate, measurable release of human capacity without introducing unacceptable risk.
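
To make the Cognitive Load Compass concrete, here is a minimal Python sketch. The 0-to-1 scoring scale, the 0.5 cut-off, the function names, and the labels for the three quadrants other than the sweet spot are my illustrative assumptions; only the two axes, the sweet spot, and the 20-hours-a-week question come from the framework itself.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    decision_complexity: float   # assumed scale: 0.0 = rule-based, 1.0 = heavy judgment
    information_density: float   # assumed scale: 0.0 = single source, 1.0 = many fragmented sources
    hours_per_week: float        # time the task consumes from your best people


def compass_quadrant(task: Task, threshold: float = 0.5) -> str:
    """Place a task on the compass. Only 'sweet spot' is named in the framework;
    the other three labels are hypothetical placeholders."""
    rule_based = task.decision_complexity < threshold
    dense = task.information_density >= threshold
    if rule_based and dense:
        return "sweet spot"
    if rule_based:
        return "conventional automation"
    if dense:
        return "human-led, AI-assisted"
    return "leave with experts"


def shortlist(tasks: list[Task], min_hours: float = 20.0) -> list[Task]:
    """Sweet-spot tasks consuming more than min_hours a week, biggest drain first."""
    picks = [
        t for t in tasks
        if compass_quadrant(t) == "sweet spot" and t.hours_per_week > min_hours
    ]
    return sorted(picks, key=lambda t: t.hours_per_week, reverse=True)


if __name__ == "__main__":
    candidates = [
        Task("load optimization", 0.3, 0.8, 35),
        Task("pricing exceptions", 0.9, 0.7, 40),
        Task("invoice matching", 0.2, 0.9, 25),
    ]
    for task in shortlist(candidates):
        print(task.name, task.hours_per_week)
```

The scores themselves should come from the operational leaders you interviewed, not from the AI team. The code only keeps the ranking honest.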

Step 2: Create a “Gold Standard Task Description” for the Algorithm

Before you talk to any technology provider, write a job description for the AI. I call this the Algorithm Mandate Canvas. It forces you to define, in plain language: what inputs the system receives, what output it must produce, what constraints it must obey, and what “done” looks like.

Example: Not “improve customer service,” but “Given a customer’s last three invoices, their current contract terms, and any open support tickets, generate a draft response to a billing dispute that aligns with our approved resolution templates, flagging anything that requires a supervisor’s escalation.”

Why this matters: You cannot measure performance if you haven’t defined the task with surgical precision. This canvas also immediately exposes whether you have the necessary data.
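
One way to keep the Algorithm Mandate Canvas honest is to write it down as a structured record rather than a slide. The sketch below is an assumption about how that could look in Python: the class name, field names, and the sample "done" criterion are hypothetical, while the inputs, output, and constraints are taken from the billing-dispute example above.

```python
from dataclasses import dataclass


@dataclass
class AlgorithmMandate:
    task: str
    inputs: list[str]          # what the system receives
    output: str                # what it must produce
    constraints: list[str]     # what it must obey
    done_criteria: list[str]   # what "done" looks like

    def is_complete(self) -> bool:
        """True only when every part of the canvas has been filled in."""
        return all([
            self.task.strip(),
            self.inputs,
            self.output.strip(),
            self.constraints,
            self.done_criteria,
        ])

    def missing_inputs(self, available_data: set[str]) -> list[str]:
        """Inputs the mandate requires that the organization cannot yet supply."""
        return [name for name in self.inputs if name not in available_data]


billing_dispute = AlgorithmMandate(
    task="Draft a response to a billing dispute",
    inputs=["last three invoices", "current contract terms", "open support tickets"],
    output="draft response to the billing dispute",
    constraints=[
        "align with approved resolution templates",
        "flag anything that requires a supervisor's escalation",
    ],
    # Hypothetical criterion, added only to illustrate the field.
    done_criteria=["reviewer can approve the draft without rewriting it"],
)

if __name__ == "__main__":
    on_hand = {"last three invoices", "current contract terms"}
    print(billing_dispute.is_complete())
    print(billing_dispute.missing_inputs(on_hand))
```

The `missing_inputs` check is the point of the exercise: it surfaces the data gap before any vendor conversation starts.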

Step 3: Anchor on Retrieval Pipelines, Not Giant Models

This is the pivotal architectural choice of 2026. The winning approach in the enterprise is not teaching a general-purpose model to memorize your proprietary details—it’s teaching a high-precision retrieval engine to pull the exact paragraphs, policies, or specifications from your own knowledge base and hand them to a lightweight reasoning layer.

The Edge: A compact, fine-tuned language model running inside your own secure environment, with access to a curated index of your company’s documents, can outperform even the most expensive public API on tasks that require institutional accuracy. You protect your IP, control your latency, and sharply reduce hallucinations, because answers are grounded in your own documents rather than in public internet data.

Tangible First Step: Charter a data product team to build a “company knowledge spine”—a curated vector store of your most critical policy documents, product manuals, and decision logs. This asset is the moat.
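
For readers who want to see the shape of a retrieval pipeline, here is a deliberately small Python sketch. It swaps in plain word-count vectors and cosine similarity as a stand-in for the learned embeddings a production vector store would use, and the class name `KnowledgeSpine`, the document ids, and the prompt wording are all hypothetical. Treat it as an illustration of the pattern (retrieve first, then hand the passages to a reasoning layer), not as a reference implementation.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Word-count vector; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class KnowledgeSpine:
    """Tiny in-memory index of curated documents."""

    def __init__(self) -> None:
        self._docs: list[tuple[str, str, Counter]] = []

    def add(self, doc_id: str, text: str) -> None:
        self._docs.append((doc_id, text, vectorize(text)))

    def retrieve(self, query: str, k: int = 2) -> list[tuple[str, str]]:
        """Return up to k (doc_id, text) pairs, most similar first."""
        q = vectorize(query)
        scored = [(cosine(q, vec), doc_id, text) for doc_id, text, vec in self._docs]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]


def build_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Assemble the grounded prompt that would be passed to the reasoning layer."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return f"Answer using only the sources below and cite their ids.\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    spine = KnowledgeSpine()
    spine.add("policy-12", "Billing disputes must be raised within 30 days; refund window is 30 days from invoice date.")
    spine.add("manual-7", "The pump requires maintenance every 500 operating hours.")
    spine.add("log-3", "Escalate billing disputes above 10000 to a supervisor.")
    question = "What is the refund window for billing disputes?"
    print(build_prompt(question, spine.retrieve(question)))
```

Notice that the model never needs to memorize the policy. The curation work goes into what is allowed inside the index, which is why that asset is hard for a competitor to copy.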

Step 4: Mandate the “Co-Pilot Review Protocol”

In 2026, full autonomy for consequential business decisions is a governance red flag. I advocate for a deployment pattern I call the Paired Execution Loop. Every AI output is reviewed by a qualified human who can approve, correct, or reject it—and their correction is immediately captured as a feedback signal that tightens the system’s future performance.

Operational Design: A credit analyst drafts a loan memo using an AI assistant. A senior underwriter edits a key risk paragraph. That edit is automatically tagged and reused to refine the AI’s understanding of the bank’s risk appetite. Over time, the human reviews less, but they never fully disconnect.

The Strategic Result: You create a proprietary learning loop. Competitors can buy the same base models. They cannot replicate the thousands of expert corrections that tune your system to your specific market reality.
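
The Paired Execution Loop only becomes a learning loop if every human verdict is captured in a form the system can reuse. The following Python sketch shows one possible record structure; the class names, fields, and the approval-rate metric are my assumptions for illustration, and how the captured pairs are fed back (fine-tuning, retrieval examples, prompt updates) is left open.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    APPROVED = "approved"
    CORRECTED = "corrected"
    REJECTED = "rejected"


@dataclass
class Review:
    output_id: str
    reviewer: str
    verdict: Verdict
    ai_text: str
    final_text: str = ""   # the reviewer's version when the verdict is CORRECTED


@dataclass
class FeedbackLog:
    reviews: list[Review] = field(default_factory=list)

    def record(self, review: Review) -> None:
        # A correction that changes nothing carries no feedback signal.
        if review.verdict is Verdict.CORRECTED and review.final_text == review.ai_text:
            raise ValueError("a correction must change the AI text")
        self.reviews.append(review)

    def correction_pairs(self) -> list[tuple[str, str]]:
        """(AI draft, expert rewrite) pairs: the proprietary tuning signal."""
        return [(r.ai_text, r.final_text) for r in self.reviews if r.verdict is Verdict.CORRECTED]

    def approval_rate(self) -> float:
        """Share of outputs approved untouched; 0.0 when nothing has been reviewed."""
        if not self.reviews:
            return 0.0
        approved = sum(r.verdict is Verdict.APPROVED for r in self.reviews)
        return approved / len(self.reviews)


if __name__ == "__main__":
    log = FeedbackLog()
    log.record(Review("memo-1", "senior underwriter", Verdict.CORRECTED,
                      "Risk is moderate.", "Risk is elevated given sector concentration."))
    log.record(Review("memo-2", "senior underwriter", Verdict.APPROVED, "Risk is low."))
    print(log.correction_pairs())
    print(log.approval_rate())
```

A rising approval rate is one signal that human review can be lightened. It is never a reason to switch review off.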

Real-World Execution: Patterns From Three Sectors

These are disguised but structurally faithful examples drawn from recent engagements, showing the Executive Tripod in action.

- Industrial Equipment Manufacturer (Project-Based Business)

The Challenge: Engineering teams spent 30% of their time re-creating proposals and technical compliance sheets for complex tenders from past material.

The Approach:

Friction Economics: Target the gap between RFP receipt and draft technical response.

Evidence Integrity: Ingest 15 years of winning proposals, engineering change orders, and performance test data into a secure knowledge repository.

Control Transparency: All AI-generated responses include a citation of the source document and a confidence score. A senior engineer must validate any section with a confidence score below 90%.

Outcome: Proposal cycle time dropped by 60%. More importantly, the “win rate” on technically complex bids improved because the AI surfaced relevant past performance data that humans routinely forgot.
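
The Control Transparency rule in this engagement is simple enough to express in a few lines. This Python sketch is my own illustration, not the client’s system: the `Section` structure, the function name, and the sample document ids are hypothetical, and only the two requirements (every section carries a source citation, and anything below 90% confidence goes to a senior engineer) come from the case.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.90  # sections below this confidence need senior engineer validation


@dataclass
class Section:
    title: str
    text: str
    source_document: str   # citation of the document the text was drawn from
    confidence: float      # 0.0 to 1.0


def split_for_validation(sections: list[Section]) -> tuple[list[Section], list[Section]]:
    """Return (must_validate, remainder). Sections without a citation are refused outright."""
    must_validate: list[Section] = []
    remainder: list[Section] = []
    for section in sections:
        if not section.source_document.strip():
            raise ValueError(f"section '{section.title}' has no source citation")
        if section.confidence < REVIEW_THRESHOLD:
            must_validate.append(section)
        else:
            remainder.append(section)
    return must_validate, remainder


if __name__ == "__main__":
    draft = [
        Section("Thermal compliance", "...", "Proposal-2019-041", 0.95),
        Section("Vibration limits", "...", "ECO-2231", 0.82),
    ]
    flagged, rest = split_for_validation(draft)
    print([s.title for s in flagged])
```

Refusing uncited sections outright, rather than merely flagging them, is a design choice on my part. It makes the audit trail a precondition of output instead of an afterthought.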

- Commercial Property Insurance Carrier

The Challenge: Underwriters were drowning in submission documents, often missing subtle exposure details buried in 200-page loss runs.

The Approach: The strategy focused exclusively on “submission triage.” A specialized AI layer now reads all incoming broker submissions, extracts key risk characteristics, and maps them against the company’s appetite guide. The output is a pre-populated risk evaluation sheet and a recommendation (pursue, decline, needs specialist) that lands in the underwriter’s queue.

The Industry Nuance: The system uses a rules-and-retrieval hybrid. It’s not predicting risk; it’s enforcing underwriting discipline and reducing the time to a go/no-go decision from 4 hours to 22 minutes. The underwriter remains the decision-maker.
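
To show what “enforcing underwriting discipline” means in practice, here is a small Python sketch of the rules half of a rules-and-retrieval hybrid. Everything specific in it is hypothetical: the submission fields, the appetite thresholds, and the declined occupancy are invented for illustration, and the extraction of risk characteristics from broker documents is assumed to have happened upstream. Only the three recommendations (pursue, decline, needs specialist) come from the case.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    # Risk characteristics assumed to have been extracted upstream.
    occupancy: str
    total_insured_value: float
    prior_losses_5y: int


# Hypothetical appetite guide; a real one would be maintained by underwriting leadership.
APPETITE_GUIDE = {
    "declined_occupancies": {"fireworks storage"},
    "max_total_insured_value": 50_000_000,
    "specialist_loss_count": 3,
}


def triage(sub: Submission, guide: dict = APPETITE_GUIDE) -> tuple[str, list[str]]:
    """Return a recommendation and the reasons behind it. The underwriter still decides."""
    if sub.occupancy in guide["declined_occupancies"]:
        return "decline", [f"occupancy '{sub.occupancy}' is outside appetite"]

    reasons = []
    if sub.total_insured_value > guide["max_total_insured_value"]:
        reasons.append("total insured value exceeds the guide limit")
    if sub.prior_losses_5y >= guide["specialist_loss_count"]:
        reasons.append("loss frequency requires specialist review")
    if reasons:
        return "needs specialist", reasons

    return "pursue", ["within appetite on all checked rules"]


if __name__ == "__main__":
    recommendation, why = triage(Submission("warehouse", 12_000_000, 1))
    print(recommendation, why)
```

Every recommendation arrives with its reasons attached, which is what lets the underwriter overrule it in seconds rather than re-read the submission.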

- Multi-Specialty Hospital Group (Revenue Cycle)

The Challenge: Denial management required a huge back-office staff to manually cross-reference payer policies and clinical documentation.

The Approach: The group built an “authorization argument builder.” When a high-cost procedure is scheduled, the AI analyzes the patient’s clinical notes against the specific payer’s medical necessity criteria and drafts a short, evidence-backed justification letter for the utilization review team.

Outcome: First-pass authorization rates improved by 18%. The staff shifted from clerical defense to complex appeal strategy, boosting both morale and cash flow.

The Uncomfortable 2026 Truth for My Fellow CXOs

Here is the opinion that will alienate the hype vendors but may save your career.

We spend far too much time talking about model architecture and far too little time talking about organizational antibodies.

Your AI strategy will live or die not on the quality of your algorithms, but on whether your front-line leaders believe the system is there to make them more valuable or to make them redundant. In too many firms, AI is still perceived as a stealth workforce reduction tool, creating a silent, active resistance that pollutes data quality, discourages honest feedback, and ensures that your most expensive initiatives yield nothing but slide decks.

The CXOs who are winning right now are spending as much time on narrative and incentive design as they are on technology architecture. They are publicly redefining roles from “doer of manual tasks” to “governor of AI-driven insights.” They are tying a portion of middle management bonuses to the measurable quality of AI corrections, not just AI adoption metrics.

In 2026, trust infrastructure beats technical infrastructure. If your people don’t trust the tool and don’t trust your intentions, no framework will save you. Build the architecture. But first, build the belief.

A Word of Sincere Appreciation

Thank you for reading through to the end. I know you didn’t have to spend your limited attention on this, and I don’t take that lightly.

If this gave you a lens to pressure-test your own AI roadmap or a phrase to use in your next executive forum, then the time was well spent.

I write regularly about the executive realities of leading through the intelligence transition: no hype, no jargon, just frameworks that work. If that sounds useful, I’d be honored to have you follow me on LinkedIn (Harsh Vardhan) or connect with me at Harshvardhan.ai. We also run an open-source community, DeepHiveMind, which you can follow on LinkedIn. We publish one deep-dive every two weeks.

Just tap the Follow button. I’ll do my best to make it worth your time.