Predictive Maintenance Using AI: A Complete Guide for Enterprise Leaders (2026)

I’m sharing my experience from across these areas of expertise:

Cloud Adoption; Applied AI, ML & MLOps; Microservices 2.0; Digital Portfolio & Product P&L; Federated Learning (Advanced AI); IaC, X-Ops, Containers, CaaS (Container-as-a-Service, Kubernetes); CaaS Governance (Helm); Digital Product UX/UI/LCNC (PWA, Micro Frontends, Low-Code/No-Code Canvas, Self-Service Interfaces); Distributed Computing; Cybersecurity; Industry 4.0 (IIoT, Intelligent Operation Platform, OT & IT); Blockchain in Data Trust; Data & Digital Transformation

A strange thing happens in boardrooms when the phrase “predictive maintenance” comes up. Executives nod along, imagining a seamless future where machines never break, while operations teams silently begin calculating how many decades of clean data they’d need just to start. The gap between the promise and the reality remains a chasm in most enterprises, even in 2026. This guide is for the technology and operations leaders who are done with the hype and want a framework that actually works: one that respects the messiness of factory floors, the politics of reliability teams, and the very real ROI targets sitting on your desk.

What Is AI-Driven Predictive Maintenance? (And What It Definitely Isn’t)

Precision matters here, because the market has muddied the term. Ask ten vendors and you’ll get ten different definitions, most of which conveniently match what they sell.

In its truest sense, AI-driven predictive maintenance (PdM 4.0) is the use of machine learning models trained on historical and real-time equipment data to forecast the remaining useful life of an asset and to prescribe the exact maintenance action needed—before failure, but not so early that you waste good component life. It’s a moving target, not a one-time alarm.

This isn’t condition-based monitoring, which simply alerts you when vibration exceeds a threshold. It isn’t calendar-based preventive maintenance, which swaps out perfectly healthy bearings because the calendar says so. Real predictive AI connects a stream of sensor readings (vibration, thermography, oil debris, motor current signature) with failure timelines from your CMMS (Computerized Maintenance Management System), learns subtle degradation patterns no human analyst would catch, and triggers a work order weeks before an outage.
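To make that connection between sensor streams and CMMS failure timelines concrete, here is a minimal sketch, assuming the CMMS history has already been reduced to a list of failure timestamps per asset. The `SensorReading` type, its field names, and the asset IDs are hypothetical. It labels each reading with the hours remaining until that asset’s next recorded failure, which is one common way to build training targets for remaining-useful-life models.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class SensorReading:
    asset_id: str
    timestamp_h: float    # hours since a common reference time
    vibration_rms: float  # one illustrative feature; real pipelines carry many


def label_hours_to_failure(
    readings: List[SensorReading], failure_times_h: Dict[str, List[float]]
) -> List[Optional[float]]:
    """Label each reading with the hours until the next CMMS-recorded failure
    of the same asset. None means no later failure is on record: the sample
    is right-censored and should not be treated as 'healthy forever'."""
    # Sort each asset's failure history once so it can be binary-searched.
    sorted_failures = {asset: sorted(times) for asset, times in failure_times_h.items()}
    labels: List[Optional[float]] = []
    for reading in readings:
        events = sorted_failures.get(reading.asset_id, [])
        # Index of the first failure at or after this reading's timestamp.
        i = bisect_left(events, reading.timestamp_h)
        labels.append(events[i] - reading.timestamp_h if i < len(events) else None)
    return labels


readings = [
    SensorReading("P-101", 10.0, 1.1),
    SensorReading("P-101", 90.0, 2.4),
    SensorReading("P-101", 150.0, 1.3),
    SensorReading("P-102", 5.0, 0.9),
]
failures = {"P-101": [100.0, 200.0]}  # hypothetical bearing failures logged in the CMMS
print(label_hours_to_failure(readings, failures))  # [90.0, 10.0, 50.0, None]
```

Keeping censored readings as `None` rather than dropping or zero-filling them matters: a pump with no recorded failure yet is not evidence of a pump that never fails.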

One point I always stress with leadership teams: the reference architecture to align your team around is MIMOSA’s OSA-CBM (Open System Architecture for Condition-Based Maintenance), the open specification that implements the functional layers of ISO 13374. If a vendor can’t map their data pipeline to those layers, from sensor data acquisition up to advisory generation, walk away. That mapping is the difference between a point solution that gathers dust and a strategic data architecture you can scale across plants. In 2026, we’re finally seeing enough industrial IoT maturity to implement this properly, but only in companies that treat PdM as a data product, not a software purchase.
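A vendor review against OSA-CBM can start as a simple coverage check. The sketch below assumes the six functional layers defined in ISO 13374 and used by OSA-CBM (data acquisition, data manipulation, state detection, health assessment, prognostics assessment, advisory generation). The component names are hypothetical, and a real review would inspect interfaces and data models, not just labels.

```python
from enum import Enum
from typing import Dict, List


class OsaCbmLayer(Enum):
    """The six functional layers of ISO 13374 / OSA-CBM, bottom to top."""
    DATA_ACQUISITION = 1
    DATA_MANIPULATION = 2
    STATE_DETECTION = 3
    HEALTH_ASSESSMENT = 4
    PROGNOSTICS_ASSESSMENT = 5
    ADVISORY_GENERATION = 6


def uncovered_layers(vendor_mapping: Dict[str, OsaCbmLayer]) -> List[OsaCbmLayer]:
    """Return the layers that no component in the vendor's pipeline claims to cover."""
    covered = set(vendor_mapping.values())
    return [layer for layer in OsaCbmLayer if layer not in covered]


# Hypothetical vendor pipeline: strong on sensing, silent on prognostics and advice.
vendor_pipeline = {
    "edge gateway": OsaCbmLayer.DATA_ACQUISITION,
    "feature service": OsaCbmLayer.DATA_MANIPULATION,
    "anomaly detector": OsaCbmLayer.STATE_DETECTION,
    "health dashboard": OsaCbmLayer.HEALTH_ASSESSMENT,
}
for layer in uncovered_layers(vendor_pipeline):
    print("Missing:", layer.name)
```

A gap at the top two layers is the typical signature of a point solution: it can tell you something looks wrong, but not when it will fail or what to do about it.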

How to Implement Predictive Maintenance with AI: A CXO Framework for 2026

Here’s the pain point no one wants to admit publicly: most enterprises have already failed at predictive maintenance once. Internal data science teams built a brilliant proof of concept on one pump, celebrated, and then watched it die in the scale-up phase because nobody solved the organizational blockers. I’ve seen it across automotive, energy, and CPG. The technology works; the operating model usually doesn’t.

So, if you’re the CTO or COO sponsoring this initiative in 2026, use the framework I’ve distilled from the survivors: the companies that now run PdM across dozens of sites with real financial impact.

Start With an Asset That Hurts Financially, Not Just Technically: Don’t pick the most instrumented machine; pick the one whose unplanned downtime makes a visible dent in your P&L. A failed packaging line that halts $200K per hour of production gets executive attention, and that attention funds the next phase. Too many pilots start with a niche, low-impact asset and never earn the right to scale.
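One way to make “hurts financially” measurable is to rank candidate assets by expected annual downtime cost. This is a back-of-the-envelope sketch, assuming you can estimate failure frequency, hours lost per failure, and cost per downtime hour for each asset; apart from the $200K/hour figure, the asset names and numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PilotCandidate:
    name: str
    failures_per_year: float
    downtime_hours_per_failure: float
    cost_per_downtime_hour: float  # lost margin plus recovery cost, in USD

    @property
    def annual_downtime_cost(self) -> float:
        # Expected yearly exposure = frequency x duration x hourly cost.
        return self.failures_per_year * self.downtime_hours_per_failure * self.cost_per_downtime_hour


def rank_by_financial_pain(candidates: List[PilotCandidate]) -> List[PilotCandidate]:
    """Order candidate assets by expected annual downtime cost, highest first."""
    return sorted(candidates, key=lambda c: c.annual_downtime_cost, reverse=True)


# Hypothetical figures: a well-instrumented but cheap-to-stop fan
# versus a packaging line that halts $200K/hour of production.
candidates = [
    PilotCandidate("cooling tower fan", 6, 2, 5_000),
    PilotCandidate("packaging line", 3, 4, 200_000),
]
for c in rank_by_financial_pain(candidates):
    print(f"{c.name}: ${c.annual_downtime_cost:,.0f} per year")
```

The fan fails twice as often, yet the packaging line carries forty times the exposure, which is exactly the asset that earns the right to scale.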

Build the Data Foundation Before You Hire ML Talent: Time-series sensor data is worthless without meticulously labeled failure events. I’ll say this bluntly: your CMMS work order history is likely a disaster. “Pump broken” is not a failure mode. You need a structured taxonomy: bearing inner race spalling, misalignment, impeller cavitation. Spend six months cleaning and tagging data with veteran maintenance technicians before a single algorithm is trained. This is unglamorous work, and it’s why I now require every PdM budget to include a dedicated “data hygiene” stream led by a reliability engineer, not a data engineer.
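As a starting point for that cleanup, a rule-based tagger can convert obvious free-text work orders into structured failure-mode codes and route the rest to technicians. This is a minimal sketch; the codes and keyword patterns are hypothetical, and keyword matching is only a bootstrap for the human-led taxonomy work described above, not a replacement for it.

```python
import re
from typing import Dict, List, Optional

# Hypothetical rules mapping free-text phrases to structured failure-mode codes.
# Veteran technicians, not data scientists, should own and extend this table.
FAILURE_MODE_RULES: Dict[str, List[str]] = {
    "BEARING_INNER_RACE_SPALLING": [r"inner race", r"spall"],
    "MISALIGNMENT": [r"misalign", r"coupling offset"],
    "IMPELLER_CAVITATION": [r"cavitat", r"impeller pitting"],
}


def tag_failure_mode(description: str) -> Optional[str]:
    """Return the first failure-mode code whose patterns match, else None.

    None means the record (e.g. "Pump broken") needs manual review by a
    technician before it can be used as a training label."""
    text = description.lower()
    for mode, patterns in FAILURE_MODE_RULES.items():
        if any(re.search(pattern, text) for pattern in patterns):
            return mode
    return None


work_orders = [
    "Replaced DE bearing, inner race spalled",
    "Pump broken",
    "Noise at low suction pressure, signs of cavitation",
]
for wo in work_orders:
    print(f"{wo!r} -> {tag_failure_mode(wo)}")
```

The useful output is the `None` pile: it sizes the manual tagging effort and shows technicians exactly which records need their judgment.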

Deploy a Hybrid Edge-Cloud Architecture from Day One: Don’t stream terabytes of raw vibration data to the cloud; it’s expensive and slow. The architecture that works in 2026 runs lightweight inference models on edge gateways and pushes only pre-processed feature vectors and anomaly alerts to a central platform. That platform, in turn, retrains the models and pushes updates back to the edge. Questions to ask your technical team: Is our model inference latency under 100 milliseconds for safety-critical alerts? Have we tested failover for when the cloud connection drops? One oil and gas client reduced cloud costs by 60% after moving to edge-first inference, while actually improving detection speed.
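The “feature vectors, not raw waveforms” idea can be sketched in a few lines. This assumes a gateway that buffers fixed-length windows of accelerometer samples and uses a single RMS alarm level; the feature set (RMS, peak, crest factor, kurtosis) is a common vibration-analysis starting point, while the function names and threshold are hypothetical. A production edge model would add spectral features and a trained detector.

```python
import math
from typing import Dict, Sequence


def vibration_features(window: Sequence[float]) -> Dict[str, float]:
    """Summarize one window of raw vibration samples into a few scalar features."""
    n = len(window)
    if n == 0:
        raise ValueError("window must contain at least one sample")
    mean = sum(window) / n
    rms = math.sqrt(sum(x * x for x in window) / n)
    peak = max(abs(x) for x in window)
    variance = sum((x - mean) ** 2 for x in window) / n
    # Non-excess kurtosis: about 1.5 for a pure sine, 3 for Gaussian noise;
    # it tends to rise when impulsive impacts (e.g. bearing defects) appear.
    kurtosis = (sum((x - mean) ** 4 for x in window) / n) / variance**2 if variance > 0 else 0.0
    crest_factor = peak / rms if rms > 0 else 0.0
    return {"rms": rms, "peak": peak, "crest_factor": crest_factor, "kurtosis": kurtosis}


def edge_payload(asset_id: str, window: Sequence[float], rms_alarm: float) -> Dict[str, object]:
    """What the gateway sends upstream: features plus a local anomaly flag, no raw samples."""
    features = vibration_features(window)
    return {"asset_id": asset_id, "features": features, "anomaly": features["rms"] > rms_alarm}


# 1,000 raw samples of a clean sine wave (10 full cycles) collapse to four numbers.
samples = [math.sin(2 * math.pi * k / 100) for k in range(1000)]
print(edge_payload("P-101", samples, rms_alarm=0.5))
```

Because the anomaly flag is computed on the gateway, the alert does not depend on the cloud link being up; only retraining does.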

Embed the Alert into the Workflow, Not a Separate Dashboard: If a maintenance planner has to log into a shiny new AI dashboard every morning, your adoption rate will crater. The prediction must appear as a prioritized work order inside the existing SAP or Maximo system, complete with an estimated failure window, recommended repair actions, and a confidence score. The system should also push a notification directly to the area technician’s mobile device. Dashboards are for weekly reviews; workflow integration is for daily survival.
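The payload a prediction should carry into the planner’s queue can be sketched as a small record. The field names and the urgency heuristic below are hypothetical and are not part of any SAP or Maximo API; pushing the record into either system would go through that system’s own integration interfaces, which this sketch does not attempt to model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List


@dataclass
class PredictedWorkOrder:
    asset_id: str
    failure_mode: str
    window_start: datetime  # earliest predicted failure time
    window_end: datetime    # latest predicted failure time
    confidence: float       # model confidence, 0.0 to 1.0
    recommended_actions: List[str] = field(default_factory=list)

    def urgency(self, now: datetime) -> float:
        """Hypothetical heuristic: confidence divided by hours until the window opens.
        Hours are floored at 1 so imminent or overdue windows do not divide by zero."""
        hours_left = max((self.window_start - now).total_seconds() / 3600, 1.0)
        return self.confidence / hours_left


def planner_queue(orders: List[PredictedWorkOrder], now: datetime) -> List[PredictedWorkOrder]:
    """Sort predictions into the order a planner should see them in the CMMS."""
    return sorted(orders, key=lambda wo: wo.urgency(now), reverse=True)


now = datetime(2026, 1, 5, 8, 0)
orders = [
    PredictedWorkOrder("P-101", "BEARING_INNER_RACE_SPALLING", now + timedelta(hours=12),
                       now + timedelta(hours=36), 0.90, ["Replace DE bearing"]),
    PredictedWorkOrder("C-7", "MISALIGNMENT", now + timedelta(hours=72),
                       now + timedelta(hours=120), 0.95, ["Laser-align coupling"]),
    PredictedWorkOrder("F-3", "IMPELLER_CAVITATION", now + timedelta(hours=6),
                       now + timedelta(hours=18), 0.40, ["Inspect impeller"]),
]
print([wo.asset_id for wo in planner_queue(orders, now)])  # ['P-101', 'F-3', 'C-7']
```

The most confident prediction (C-7) lands last because it has three days of slack; a planner’s queue should reflect time pressure, not model confidence alone.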

Govern the Model Lifecycle Like a Physical Asset: Models drift. A pump your model was trained on starts handling a slightly denser fluid next quarter, and suddenly your false positive rate spikes. Establish a board-level KPI for “model health” that tracks accuracy, precision, recall, and, crucially, false positive rate per asset class. Schedule model retraining just as you’d schedule a turbine overhaul. I’ve seen too many perfectly good AI implementations degrade into nuisance alarms that maintenance crews simply ignore after six months. At that point, you have a $2 million mute button.
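The model-health KPIs can be computed per asset class from a simple log of alarm outcomes. This sketch assumes each record is one asset over one evaluation period (say, an asset-week), tagged with whether the model alarmed and whether a failure actually occurred; the records and the 5% false-positive limit are hypothetical values a reliability team would set for itself.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def _ratio(num: int, den: int) -> float:
    return num / den if den else 0.0


def model_health(records: Iterable[Tuple[str, bool, bool]]) -> Dict[str, Dict[str, float]]:
    """records: (asset_class, model_alarmed, failure_occurred), one per asset per
    evaluation period. Returns the model-health KPIs for each asset class."""
    counts: Dict[str, Dict[str, int]] = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for asset_class, alarmed, failed in records:
        c = counts[asset_class]
        if alarmed and failed:
            c["tp"] += 1
        elif alarmed:
            c["fp"] += 1  # nuisance alarm
        elif failed:
            c["fn"] += 1  # missed failure
        else:
            c["tn"] += 1
    report = {}
    for asset_class, c in counts.items():
        total = sum(c.values())
        report[asset_class] = {
            "accuracy": _ratio(c["tp"] + c["tn"], total),
            "precision": _ratio(c["tp"], c["tp"] + c["fp"]),
            "recall": _ratio(c["tp"], c["tp"] + c["fn"]),
            "false_positive_rate": _ratio(c["fp"], c["fp"] + c["tn"]),
        }
    return report


def retraining_due(report: Dict[str, Dict[str, float]], max_fpr: float) -> List[str]:
    """Asset classes whose false positive rate has drifted above the agreed limit."""
    return sorted(k for k, m in report.items() if m["false_positive_rate"] > max_fpr)


# Hypothetical quarter of asset-week outcomes for two asset classes.
records = (
    [("pump", True, True)] * 8 + [("pump", True, False)] * 2
    + [("pump", False, False)] * 88 + [("pump", False, True)] * 2
    + [("compressor", True, True)] * 5 + [("compressor", True, False)] * 10
    + [("compressor", False, False)] * 85
)
report = model_health(records)
print(retraining_due(report, max_fpr=0.05))  # ['compressor']
```

Note that the compressor model has perfect recall yet still needs attention: two out of three of its alarms are false, which is how a mute button starts.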

Real-World Examples: From Factory Floors to Oil Rigs

The most instructive examples come from gritty reality, not polished vendor case studies. Here are three, each highlighting a different lesson: a specific pain point, an industry context, and scaling.

Automotive Manufacturing (Pain Point Focus)

A Tier-1 supplier to BMW and Ford had a chronic issue with robotic welding gun tips degrading unpredictably, causing micro-cracks that forced entire body-in-white lines to stop for rework. The company’s internal PdM team initially deployed a black-box neural network that flagged anomalies but gave zero explanation. The maintenance team ignored it. The pivot came when they switched to a first-principles physics model augmented with a lightweight LSTM (Long Short-Term Memory) network. The AI now sends a work order that includes a simple statement: “Tip electrode degradation rate 2.3x baseline; predicted burst in 8 shift-hours; recommended replacement during next 2-hour planned window.” Downtime from weld failures dropped 74% in 18 months. The lesson: you need explainability crafted for the mechanic, not the data scientist.
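The explainability lesson can be illustrated by how a model’s output gets rendered for the shop floor. This is a minimal sketch, not the supplier’s actual system: it assumes the model already produces a current degradation rate, a baseline rate, and a remaining wear margin (all hypothetical inputs in one shared unit), and it uses a simple linear extrapolation for time to failure.

```python
def mechanic_message(component: str, current_rate: float, baseline_rate: float,
                     remaining_margin: float, hours_until_next_window: float,
                     window_length_hours: float) -> str:
    """Turn a degradation estimate into a one-line, mechanic-readable work order note.

    Rates are in a hypothetical wear unit per shift-hour, margin in the same
    wear unit; time to failure is a simple linear extrapolation."""
    ratio = current_rate / baseline_rate
    hours_left = remaining_margin / current_rate
    if hours_until_next_window < hours_left:
        action = f"recommended replacement during next {window_length_hours:g}-hour planned window"
    else:
        action = "failure expected before next planned window; escalate for an unplanned stop"
    return (f"{component} degradation rate {ratio:.1f}x baseline; "
            f"predicted failure in {hours_left:.0f} shift-hours; {action}.")


print(mechanic_message("Tip electrode", current_rate=0.23, baseline_rate=0.10,
                       remaining_margin=1.84, hours_until_next_window=3,
                       window_length_hours=2))
```

The message states a comparison to normal, a deadline, and an action, which is the kind of explanation a mechanic can act on without trusting a black box.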

Offshore Oil & Gas (Industry Focus)

An operator in the North Sea runs unmanned platforms where a repair crew flown out by helicopter costs north of $250,000 per unplanned visit. They implemented AI-driven PdM on gas compressors using acoustic emission sensors and a distributed fiber optic sensing network. What’s remarkable isn’t just the technology; it’s the commercial model. The vendor is compensated on “avoided helicopter call-outs,” so it shares the risk. This forced the AI developer to obsess over false positives, because every false alarm that sent a crew out cost them directly. The failure detection rate sits above 92%, with a false positive rate below 1.5%. Insurance underwriters now factor predictive analytics maturity into the premium calculation, creating a double ROI: lower maintenance costs and lower insurance costs.

Global Food & Beverage (Authority in Scaling)

A CPG giant that produces dairy-based products faced a scaling nightmare. A successful pilot on a homogenizer in one German plant couldn’t be replicated anywhere else, because every plant used different sensor brands and naming conventions. Their breakthrough was a “data standardization fabric”: a semantic layer that maps every plant’s sensor tags to a common, ISO 14224-aligned descriptive model before any AI touches the data. They can now onboard a new plant in three weeks instead of nine months. The CTO told me, “We stopped calling it an AI project and started calling it a data unification project with AI outcomes.” That semantic shift saved the program from political cancellation twice.
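A semantic layer of this kind boils down to mapping each plant’s tag conventions, and units, onto one shared model before analytics runs. The sketch below is illustrative only: the plant names, tag patterns, and canonical field names are hypothetical, and a real ISO 14224-aligned model would carry a far richer equipment taxonomy than an asset type and a measurement name.

```python
import re
from typing import Dict, Optional, Tuple

# Hypothetical per-plant tag conventions mapped to one canonical model:
# regex -> (asset_type, measurement, factor converting the plant's unit to mm/s).
PLANT_TAG_RULES: Dict[str, Dict[str, Tuple[str, str, float]]] = {
    "plant_a": {  # reports vibration velocity in mm/s
        r"HMG\d+_VIB_DE": ("homogenizer", "vibration_velocity_drive_end", 1.0),
    },
    "plant_b": {  # reports vibration velocity in in/s (1 in/s = 25.4 mm/s)
        r"homog-\d+\.vib\.de": ("homogenizer", "vibration_velocity_drive_end", 25.4),
    },
}


def canonicalize(plant: str, tag: str, value: float) -> Optional[Dict[str, object]]:
    """Map one raw plant reading onto the shared model, or None if the tag is unknown."""
    for pattern, (asset_type, measurement, to_mm_s) in PLANT_TAG_RULES.get(plant, {}).items():
        if re.fullmatch(pattern, tag):
            return {"asset_type": asset_type, "measurement": measurement,
                    "value_mm_s": value * to_mm_s}
    return None  # unmapped tags are surfaced for onboarding, never fed to the model


print(canonicalize("plant_a", "HMG3_VIB_DE", 2.5))
print(canonicalize("plant_b", "homog-12.vib.de", 0.1))
print(canonicalize("plant_b", "pump-4.temp", 61.0))
```

Onboarding a new plant then means writing its rule table, not retraining the model, which is why the mapping work, rather than the AI, sets the onboarding timeline.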

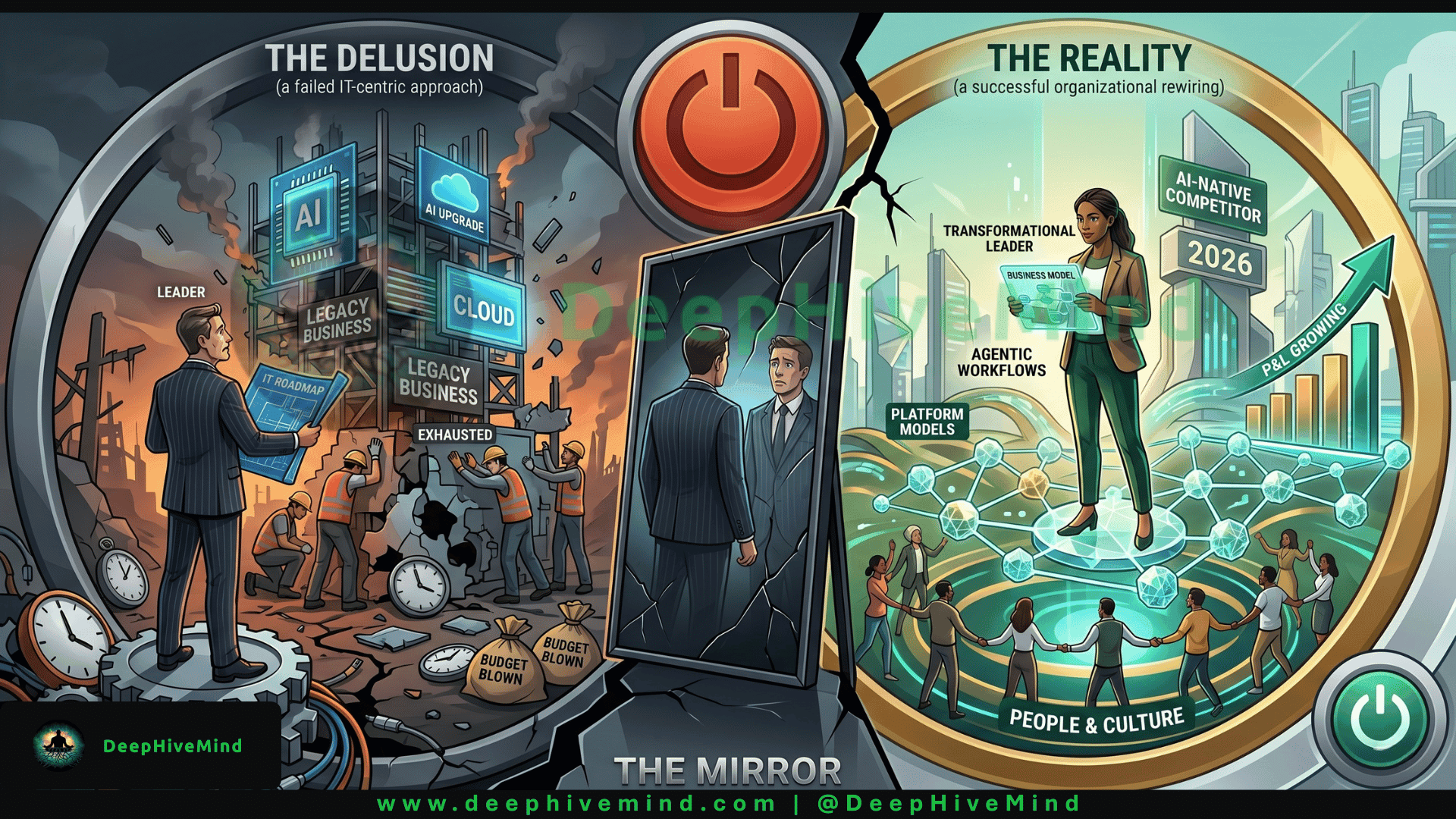

Strong Opinion: AI Is a Mirror, Not a Magic Wand

After a decade of watching predictive maintenance programs rise and fall, I’ve landed on one uncomfortable conviction: AI does not fix a broken maintenance culture. It amplifies the culture you already have.

If your organization reacts to problems only after breakdowns, tolerates perpetually overridden alarms, and sees maintenance as a cost center to be minimized, then deploying an AI system will just give you very early, very precise warnings that you will then ignore. You’ll have invested millions to learn about a failure two weeks ahead of time and still let the machine crash, because no one trusted the new system enough to stop production.

Conversely, in organizations where reliability is genuinely strategic—where the board discusses “asset health” alongside revenue—the exact same AI tool becomes a force multiplier. It gives your best reliability engineers superhuman sight, allowing them to intervene at exactly the right moment and finally justify those hard conversations about taking an asset offline before it fails.

The technology is ready. The sensors, the connectivity, the edge compute, and the algorithms are all mature. The frontier in 2026 isn’t technical; it’s behavioral. The companies that win are the ones whose leaders understand that predictive maintenance is a management philosophy supported by AI, not an IT project handed to the analytics department. My advice to CXOs: start with a brutally honest audit of your maintenance culture, and only then buy the algorithms. You might find you need to fix your decision-making loops before you need a neural network.

Thank you for taking the time to read this guide. We know that as an enterprise leader, your reading time is scarce and fought over by dozens of competing narratives. If this perspective resonates with how you’re trying to transform operations, and you’d like to receive our regular deep dives into industrial AI, digital operating models, and leadership strategy, connect with me (Harsh Vardhan) on LinkedIn or visit Harshvardhan.ai. You can also join our open-source community, DeepHiveMind, and follow DeepHiveMind on LinkedIn.

We read every reply. Thank you again.

#AIReturnOnInvestment #AIMeansBusiness #NoMorePilots #AIAtScale #ROI2026 #DigitalTransformation #BusinessAI #IndustrialAI #AIAccountability #ShowMeTheROI #ProfitFromAI #StrategicAI #HarshVardhan