Enterprise AI Adoption in 2026: The Complete Guide to a Practical Roadmap That Actually Works

A field-tested guide to AI adoption that creates durable competitive advantage rather than a graveyard of unused dashboards.

I write from hands-on experience in the following areas:

Cloud Adoption; Applied AI, ML & MLOps; Microservices 2.0; Digital Portfolio & Product P&L; Federated Learning (Adv. AI); IaC, X-Ops, Containers, CaaS (Container-as-a-Service | Kubernetes), CaaS Governance (Helm); Digital Product UX/UI/LCNC (PWA / micro frontend / low-code no-code canvas / self-service interface); Distributed Computing; Cybersecurity; Industry 4.0 (IIoT, Intelligent Operation Platform, OT & IT); Blockchain in Data Trust; Data & Digital Transformation

Every boardroom I’ve sat in over the last eighteen months echoes the same refrain: “We’re investing heavily in AI, but we’re not seeing the transformation we expected.” They’ve run the hackathons, stood up centers of excellence, and greenlit dozens of proofs of concept. And yet, the gap between the AI demos and the balance sheet remains stubbornly wide. If that sounds familiar, you’re in the right place. This guide strips away the hype and gives you a field-tested, opinionated roadmap for enterprise AI adoption — the kind that creates durable competitive advantage rather than a graveyard of unused dashboards.

What Is Enterprise AI Adoption (and What It Definitely Is Not)

Enterprise AI adoption isn’t about deploying a chatbot or plugging a large language model into your CRM. Those are features. Adoption, in the sense that matters to a CXO, is the systemic integration of machine intelligence into the core operating fabric of a business — changing how decisions are made, how resources are allocated, and how value is delivered to customers at scale.

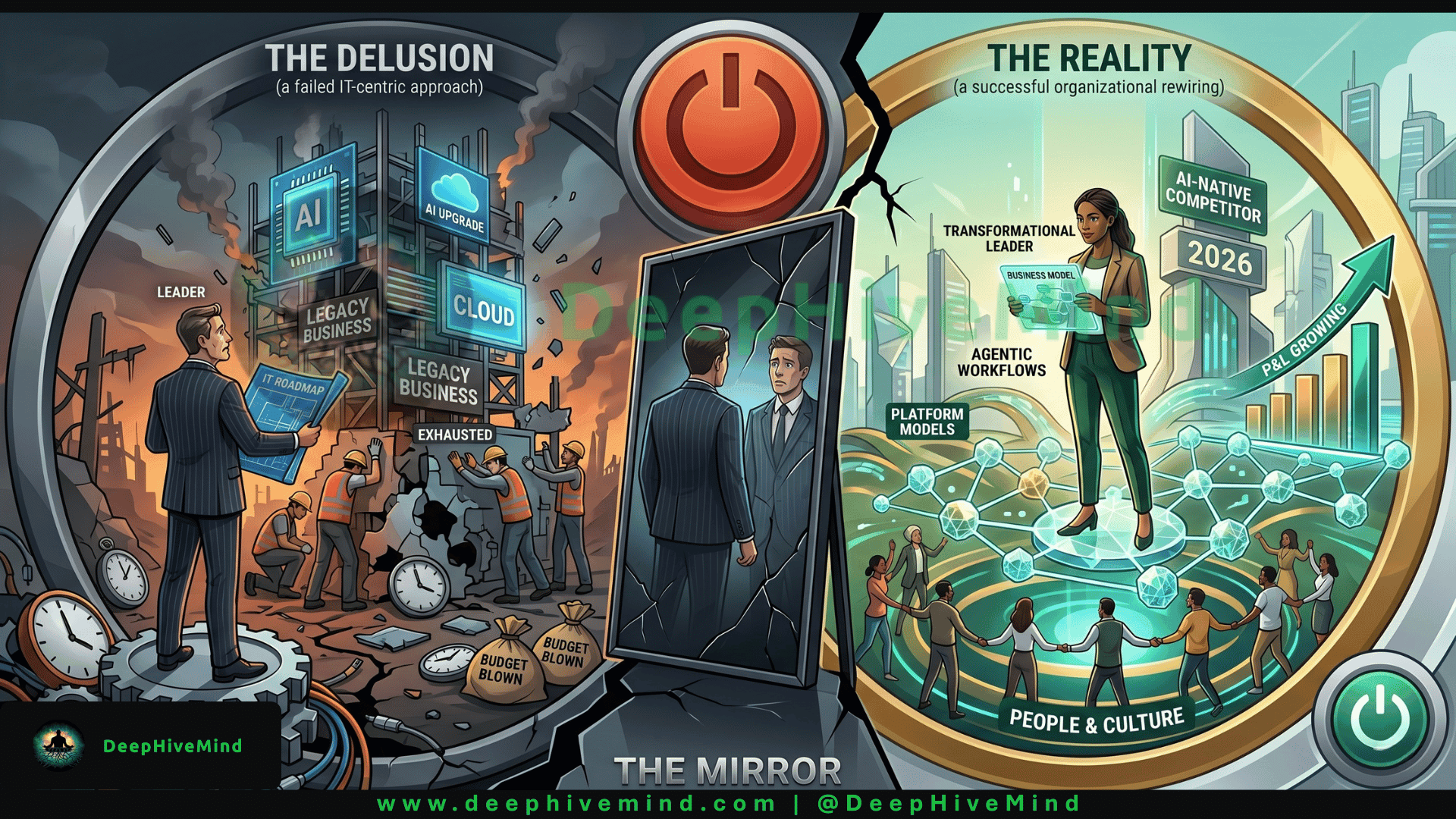

The most persistent mistake I see is treating AI adoption as a technology project. It’s a business model evolution that happens to be executed through software. When a global manufacturer retools an entire supply chain to be self-optimizing, or a financial services firm embeds real-time risk models into every lending decision, they aren’t “installing AI.” They are rewiring decades of process, culture, and governance. That distinction is the single biggest predictor of success.

In 2026, the conversation has thankfully moved beyond “what is generative AI.” The firms pulling ahead understand that adoption means three things simultaneously: technical fluency (the infrastructure and data foundations), organizational readiness (talent, incentive structures, change management), and strategic alignment (picking use cases that defend or extend a moat, not just ones that demo well). Ignore any one of those pillars and your adoption roadmap turns into a very expensive science fair.

How to Build an Enterprise AI Adoption Roadmap That Survives Contact with Reality

Most roadmaps fail because they’re built backwards — they start with the technology and go hunting for a problem. The framework I’ve seen work repeatedly across regulated, asset-heavy, and customer-facing industries looks deceptively simple in outline, but demands rigorous honesty in execution. I call it the Anchor, Prove, Scale, Defend model.

Anchor to a Measurable Business Outcome, Not a Capability: Months before writing a single line of code, the executive team must agree on one to three business metrics that will define success. Not “improve developer productivity” but “reduce the cycle time of claims processing from fourteen days to four hours.” Not “explore personalization” but “increase customer lifetime value by nine percent in the under‑35 segment.” This anchoring does two things: it creates a non-negotiable tripwire that kills vanity projects early, and it forces a painful but productive conversation about data readiness. If you can’t measure the problem today, you won’t measure AI’s impact on it tomorrow.
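To make the anchoring idea concrete, here is a minimal Python sketch of how a team might record an agreed metric and check it against a tripwire. It is an illustration under stated assumptions, not a prescribed tool: the `OutcomeMetric` class, the `tripwire` function, and the `min_progress` threshold are hypothetical names introduced for this example. Only the claims-processing figures (fourteen days down to four hours) come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeMetric:
    """One anchored business metric (class and field names are illustrative)."""
    name: str
    unit: str
    baseline: float  # value measured today, before any AI work
    target: float    # value the executive team agreed defines success

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works for metrics that should fall (cycle time) or rise (lifetime value).
        """
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap


def tripwire(metric: OutcomeMetric, current: float, min_progress: float) -> bool:
    """Return True when the initiative should be stopped or re-scoped.

    min_progress is a hypothetical threshold the executive team would set
    for each review checkpoint.
    """
    return metric.progress(current) < min_progress


# Example from the text: claims processing from fourteen days to four hours.
claims_cycle = OutcomeMetric(
    name="claims processing cycle time",
    unit="hours",
    baseline=14 * 24,
    target=4,
)
```

The useful property of this shape is that the baseline is a required field: if nobody can supply it, the data-readiness conversation happens before the project starts rather than after.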

Prove Value in a High-Pain, High-Trust Envelope: Choose a first use case that is important enough to matter but contained enough to finish in ninety days. I often see CEOs push for customer-facing generative experiences right out of the gate, only to hit regulatory or brand‑safety walls that stall momentum. The smarter play is usually an internal decision-support system, something that augments a high‑stakes human process. For example, a pharmaceutical company might start with AI‑assisted adverse event detection in pharmacovigilance case processing, where the return is huge (in both cost and patient safety) and the domain is already governed by strict human-in-the-loop protocols. The goal of the “Prove” phase is not a flashy demo; it’s to win the right to scale by generating a cashable result and a re‑usable data pipeline.

Scale Through a Product Operating Model, Not a Project Portfolio: This is where the road forks between the leaders and the experimenters. The natural reflex is to fund AI as a series of projects with discrete end dates. But AI models are never “finished”; they drift, they learn, they need ongoing feedback loops. Scaling requires a product mindset: a dedicated, cross‑functional team (data engineers, ML engineers, domain experts, and a product manager) that owns a use case long-term and is measured on the business metric, not model accuracy. One industrial conglomerate I studied shifted from a central AI lab prioritizing thirty-eight separate initiatives to three end-to-end product teams aligned to order‑to‑cash, source‑to‑pay, and asset health. Twelve months later, the number of models in production hadn’t increased dramatically, but the amount of value captured had multiplied by six. Less science, more product.

Defend Trust as a First-Class Architectural Requirement: Scale brings scrutiny. In 2026, regulators in the EU, parts of the US, and APAC are no longer just issuing guidance; they are imposing fines. Your roadmap must bake in observability, bias monitoring, explainability, and a “human kill switch” from the start, not as a compliance afterthought. The most compelling framework here is the “Three Lines of Defense” adapted from risk management: the product team owns model health (first line), an independent validation function challenges it (second line), and internal audit provides assurance (third line). Companies that treat trust as a blocker to speed inevitably learn that rebuilding trust after a public failure is slower than complying with any regulation.
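As one possible illustration of observability paired with a “human kill switch”, the Python sketch below computes a Population Stability Index (PSI) over binned model inputs and routes decisions to people once drift crosses a limit. The choice of PSI, the 0.25 limit (a commonly quoted rule of thumb, treated here as an assumption), and the names `psi`, `KillSwitch`, and `decide` are all mine for the sake of the example. The roadmap itself does not prescribe a specific drift metric.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both inputs are per-bin proportions over the same bins.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total


class KillSwitch:
    """Hypothetical switch that a monitor or a person can trip."""

    def __init__(self, psi_limit: float = 0.25) -> None:
        self.psi_limit = psi_limit  # assumed threshold, tune per use case
        self.tripped = False
        self.reason = ""

    def trip(self, reason: str) -> None:
        """Manual or automatic stop; stays tripped until a human resets it."""
        self.tripped = True
        self.reason = reason

    def check_drift(self, expected: Sequence[float], actual: Sequence[float]) -> float:
        """Compute PSI and trip the switch if it exceeds the limit."""
        score = psi(expected, actual)
        if score > self.psi_limit:
            self.trip(f"input drift: PSI {score:.3f} > {self.psi_limit}")
        return score


def decide(switch: KillSwitch, model_recommendation: str) -> str:
    """Pass the model's recommendation through only while the switch is untripped."""
    return "route_to_human" if switch.tripped else model_recommendation
```

In a Three Lines of Defense setup, the product team would plausibly own the `check_drift` call, while the independent validation function would own the limit and the authority to call `trip` directly.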

Examples: What Adoption Actually Looks Like on the Ground

Abstract frameworks need real fingerprints. Here are two that cut across different industries, each revealing a different speed of adoption and a distinct organizational muscle.

Industry Example: Retail Supply Chain Planning: A large North American retailer with over 2,000 stores and a complex perishable goods network spent 2023–2024 drowning in AI proofs of concept. The turning point came when the new Chief Supply Chain Officer killed fifteen concurrent pilots and refocused the entire AI investment around one brutal pain point: markdown optimization for short-shelf-life products. The company built a “decision intelligence” product team that embedded directly with merchandising planners. The model ingested local weather, foot‑traffic signals, inventory aging, and competitive pricing, then issued markdown recommendations with a plain-English rationale. The result wasn’t just a 22% reduction in food waste; it changed the culture. Planners who had been skeptical started demanding deeper integration. Now the same product team is expanding into assortment localization. The lesson: a single, deeply embedded win reshapes an organization faster than a hundred educational town halls.

Industry Example: Financial Services Underwriting: A mid‑tier commercial bank wanted to compete more effectively in small‑business lending without blowing up its risk profile. Rather than attempting to build a “fully automated AI underwriter” (which would have spent years in regulatory limbo), the Chief Risk Officer and CTO co-sponsored a “copilot” approach. They trained a model on five years of loan performance data, third‑party cash‑flow aggregators, and public records to produce a risk summary and a set of probing questions for human underwriters within twenty seconds of application intake. The model wasn’t allowed to decline a loan; it was designed to make the human better. After nine months of parallel running, the pilot segment showed a 17% lift in approval rates for creditworthy small businesses that had previously been declined due to manual processing friction, with no increase in delinquency. The model is now the standard first pass across the entire small‑business portfolio. The board-level insight: AI adoption succeeded because the risk team became the most vocal champion, not the technology team.

Authority Note

These patterns aren’t merely anecdotal. Research from McKinsey’s latest global survey on the state of AI shows that organizations using what I’d call a “product-and-platform” operating model are 2.3 times more likely to see EBIT impact exceeding 20% from their AI investments than those managing AI through centralized centers of excellence alone. Gartner’s 2026 AI strategy planning assumptions further warn that through 2027, more than 60% of enterprises that fail to create an AI governance layer separate from their data governance will experience a front-page AI ethics failure. The data are aligning with what practitioners on the ground already feel: structure and business alignment are the new differentiators, not model size.

Strong Opinion to Close

Here’s the insight I will defend in front of any board: if your AI adoption roadmap is owned by your CIO alone, you have already lost. AI adoption is not a technology upgrade; it is a synchronized change in how you allocate capital, how you manage talent, and how you define accountability. The companies winning in 2026 are the ones where the CEO has become the de facto Chief AI Storyteller — constantly connecting the technology’s capability back to customer pain and competitive positioning — while the CFO runs a parallel value-capture audit at every stage. Treat AI like electricity: you don’t appoint an “electricity officer” and hope the business transforms; you restructure the work itself to exploit the new utility. If you can’t look at your executive incentive structure and see clear, personal consequences for AI’s business outcomes, the roadmap is just a document.


Thank you for reading. I know your calendar has no empty space, so the fact that you invested your attention here means a great deal. If this roadmap gave you a sharper mental model or a line you’ll use in your next strategy review, I’d be grateful if you shared it with a colleague who’s wrestling with the same challenge. To get more no-hype guides like this one, follow me, Harsh Vardhan, on LinkedIn, or connect with me at Harshvardhan.ai. We also run an open-source community, DeepHiveMind, which you can follow on LinkedIn. We publish one deep dive every two weeks, always grounded in the messy reality of building tech inside large organizations, never the glossy vendor slide deck.